Acknowledgements: The Aselo Team, Annalise Irby, Nick Hurlburt, Dee Luo

Key Points

- AI summaries of helpline conversations are making counsellor workflows faster, reducing admin work and cutting repetitive documentation.

- Counsellors and supervisors find the summaries useful because they are accurate, structured, and consistent.

- Classification experiments show that understanding counsellor workflows matters more than model accuracy alone.

- Globally relevant AI advances can be achieved without compromising trustworthy data practices.

Every day, helpline counsellors handle emotionally heavy conversations, sometimes lasting hours. Each conversation must be documented: writing summaries, selecting issue categories, filling out form fields, and preparing reports for supervisors or case managers.

Counsellors’ documentation, which we call helpline data entry, is critical. It protects callers, supports data quality and supervision, and surfaces trends across regions and countries. It is also time-consuming and mentally demanding, leaving counsellors less time for actual counselling.

We built Aselo, our open-source contact center for these helplines, to add text channels to traditionally voice-only helplines and to improve counsellor productivity. One of the main opportunities for productivity improvement is reducing the time counsellors spend on helpline data entry.

With generative AI tools, we saw an opportunity to do more: could we design AI tools that make counsellors’ work easier, faster, and more consistent, while preserving trust, privacy, and counsellor agency?

We focused on two features that lay the foundation for a fully automated Counsellor Data Entry Assistant.

- Summarization, which supports counsellors with structured, accurate summaries of a conversation.

- Issue categorization, which explores how AI can help streamline or automate the selection of presenting issues.

When we began building AI into Aselo, our guiding principle was to amplify counsellors’ work without compromising trust, data protection, or human judgment.

In this post, I share three key learnings from building AI tools for helplines, spanning data stewardship, human-in-the-loop summarization, and the limits of automated classification over large label sets. They reflect the technical challenges, the human-centered design decisions, and the operational principles that shaped our approach to responsible AI in helpline services.

Learning 1: Protecting Sensitive Data Builds Trust and Enables Innovation

Rather than limiting innovation, privacy and protection policies create a foundation for trustworthy collaboration and safe experimentation.

Working with sensitive helpline data means earning trust through clear, practical safeguards. One of Aselo’s founding principles was that helplines own their data. True to that principle, this AI work only included helplines that explicitly opted in. We were transparent about how their data would be used and who would have access. From the beginning, we adopted policies that center data protection and privacy:

- Personally Identifiable Information (PII) redaction, which removes identifying details before any experimentation occurs.

- Zero data retention, meaning no conversational data is stored by models or model providers.

- Aselo’s already secure data infrastructure underpins all work.

With these safeguards in place, we could prototype AI data entry features such as summarization and issue categorization on small datasets. This approach gave partners confidence to collaborate, knowing that no conversational data would be stored or repurposed by third-party service providers. OpenAI supported zero data retention for its models, enabling experimentation without retaining any data.

High-performing entity recognition tools for redaction, combined with the trust built through careful data stewardship, made privacy-preserving experimentation possible. This approach not only protected sensitive data but also established the foundation for trustworthy AI tools that augment counsellor workflows responsibly.
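The post does not describe Aselo's redaction pipeline, so the following is only a minimal sketch of the general idea behind PII redaction: detected spans are replaced with typed placeholders before any text leaves the system. It assumes an entity recognition model has already returned `(start, end, label)` spans for names and places; the `PATTERNS` regexes, the `redact` function, and the placeholder format are all hypothetical illustrations, not Aselo's code.

```python
import re
from typing import List, Tuple

Span = Tuple[int, int, str]  # (start offset, end offset, entity label)

# Hypothetical regexes for structured identifiers. They are deliberately broad
# (the phone pattern can also catch dates), which errs on the side of redaction.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+"),
    "PHONE": re.compile(r"\+?\d[\d\s-]{7,}\d"),
}


def find_pattern_spans(text: str) -> List[Span]:
    """Return spans matched by the regex patterns."""
    spans: List[Span] = []
    for label, pattern in PATTERNS.items():
        for m in pattern.finditer(text):
            spans.append((m.start(), m.end(), label))
    return spans


def redact(text: str, ner_spans: List[Span]) -> str:
    """Replace every detected span with a [LABEL] placeholder.

    `ner_spans` stands in for the output of an entity recognition model
    (e.g. PERSON or LOCATION spans); here the caller supplies it directly.
    """
    spans = sorted(find_pattern_spans(text) + ner_spans)
    # Merge overlapping spans so no character is redacted twice.
    merged: List[Span] = []
    for start, end, label in spans:
        if merged and start < merged[-1][1]:
            prev_start, prev_end, prev_label = merged[-1]
            merged[-1] = (prev_start, max(prev_end, end), prev_label)
        else:
            merged.append((start, end, label))
    # Rebuild the text left to right, swapping each span for its placeholder.
    out: List[str] = []
    cursor = 0
    for start, end, label in merged:
        out.append(text[cursor:start])
        out.append(f"[{label}]")
        cursor = end
    out.append(text[cursor:])
    return "".join(out)
```

For example, `redact("Hi, I'm Sam, call me on 555-123-4567 or email sam@example.org", [(8, 11, "PERSON")])` returns `"Hi, I'm [PERSON], call me on [PHONE] or email [EMAIL]"`. Typed placeholders, rather than blank deletions, keep the transcript readable for downstream summarization.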

Learning 2: AI Helps Write Summaries Faster While Preserving Counsellors’ Agency

LLM-assisted summaries can speed up data entry while supporting human judgment and consistent documentation.

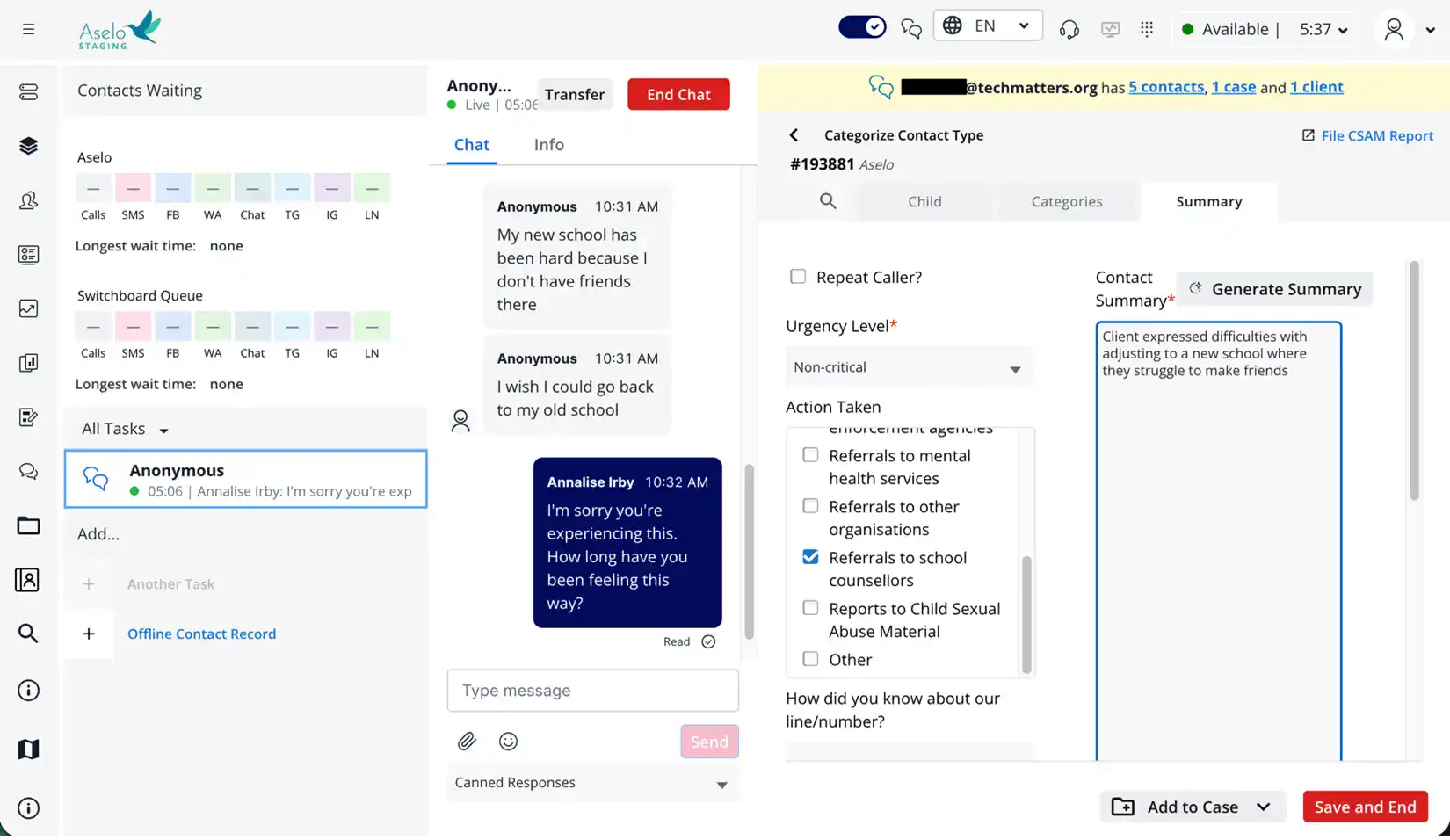

Following Aselo’s co-creation approach, we designed AI with counsellors in the loop, ensuring that automation supports helplines’ data entry needs.

AI Summaries Prototype

Before development, we ran a prototyping pilot that tested four types of summaries. These ranged from short, concise versions to longer, structured formats similar to those used in helplines’ training documents. We evaluated them using BLEU and ROUGE scores, LLM evaluators, and direct human ratings for accuracy and usefulness.
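To make the overlap-based metrics concrete, here is a simplified sketch of ROUGE-1 and ROUGE-L F1 computed against a reference summary. It is not the scoring code used in the pilot: it assumes lowercase whitespace tokenization with no stemming, whereas standard ROUGE implementations handle tokenization and stemming more carefully. BLEU is not shown.

```python
from collections import Counter
from typing import List


def tokenize(text: str) -> List[str]:
    # Simplification: lowercase and split on whitespace; no stemming or punctuation handling.
    return text.lower().split()


def f1(overlap: float, cand_len: int, ref_len: int) -> float:
    """Harmonic mean of precision (vs. candidate length) and recall (vs. reference length)."""
    if overlap == 0:
        return 0.0
    precision = overlap / cand_len
    recall = overlap / ref_len
    return 2 * precision * recall / (precision + recall)


def rouge_n(candidate: str, reference: str, n: int = 1) -> float:
    """ROUGE-N F1: clipped n-gram overlap between candidate and reference."""
    def ngrams(tokens: List[str]) -> Counter:
        return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

    cand, ref = ngrams(tokenize(candidate)), ngrams(tokenize(reference))
    overlap = sum((cand & ref).values())  # '&' keeps the minimum count per n-gram
    return f1(overlap, sum(cand.values()), sum(ref.values()))


def lcs_length(a: List[str], b: List[str]) -> int:
    """Length of the longest common subsequence, via row-by-row dynamic programming."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b):
            curr.append(prev[j] + 1 if x == y else max(prev[j + 1], curr[j]))
        prev = curr
    return prev[-1]


def rouge_l(candidate: str, reference: str) -> float:
    """ROUGE-L F1 based on the longest common subsequence of tokens."""
    cand, ref = tokenize(candidate), tokenize(reference)
    return f1(lcs_length(cand, ref), len(cand), len(ref))
```

With the made-up pair `candidate = "the caller was anxious about school exams"` and `reference = "the caller felt anxious about exams"`, five tokens overlap (in order), so both scores are 2 × 5 / (7 + 6) = 10/13 ≈ 0.77. Metrics like these reward wording overlap rather than factual accuracy or usefulness, which is one reason to pair them with human ratings.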

Longer and more structured summaries were preferred by both human raters and LLM-as-judge evaluators, showing early alignment between the two. These summaries provided the clearest understanding of what happened during the contact. I also revisited the literature on evaluation metrics for natural language generation (NLG) tasks such as summarization; for example, I spent time on Ehud Reiter’s blog reading about the current state of NLG evaluation and about LLM-based evaluation methods and their shortcomings. From there, I developed helpline-specific indicators for quality and usefulness.
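The post does not say how alignment between human raters and LLM judges was quantified. One common option for ordinal ratings is Spearman's rank correlation over the same set of summaries, sketched below with tie-aware ranking. The function names are illustrative, and the sketch assumes neither rater gives every summary the same score (which would make the correlation undefined).

```python
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """Rank values 1..n, giving tied values the mean of the positions they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        # Extend j over the run of values tied with values[order[i]].
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # positions i..j are 0-based
        for idx in order[i:j + 1]:
            ranks[idx] = mean_rank
        i = j + 1
    return ranks


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rho: the Pearson correlation of the two rank vectors."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(x)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5
```

A value near 1 means the LLM judge orders summaries much as human raters do; it says nothing about whether the absolute scores match, so it complements rather than replaces direct human review.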

How Co-Design Shaped the Feature

We listened to counsellors and supervisors to understand how summaries fit within their workflow. They told us they needed:

- summaries that are transparent and editable,

- support for long and trauma-heavy conversations,

- and less time spent reviewing and correcting summaries.

Based on this feedback, we created a one-click summarization tool that produces an editable three-paragraph summary directly within Aselo. The same feedback shaped broader design choices, such as keeping counsellors in the loop, ensuring transparency, and embedding AI features securely.

Early Impact in the Beta Test

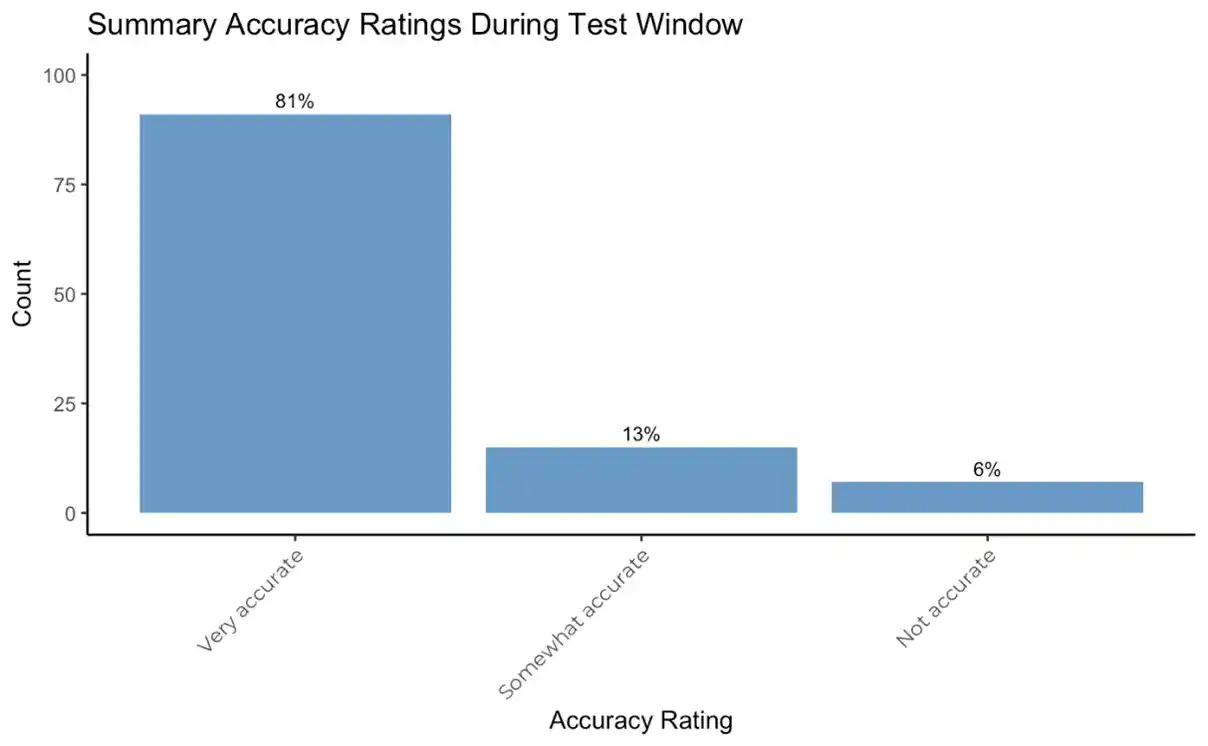

We evaluated the feature with a mixed-methods approach. We tracked accuracy ratings, edits to summaries, user engagement, supervisor feedback, and wrap-up times (time spent entering data after a call ends). We adapted the four-tiered AI evaluation system developed by The Agency Fund and the Center for Global Development to track both technical and human-centered metrics.

What happened? Here is what we observed:

- Counsellors rated AI-supported summaries as highly accurate and more standardized across their peers.

- Supervisors noticed that the format, structure, and quality of summaries became more consistent across their workforce of counsellors.

- Counsellors edited some of the AI-generated summaries, which showed active engagement, but often used them largely unchanged.

- And most importantly, we saw more chats with short wrap-up times.

When counsellors marked a summary as Not Accurate, it was usually because the conversation was very brief and a three-paragraph summary didn’t make sense. In response to this feedback, the next version of the feature takes the length of the interaction into account when generating summaries.
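The post does not describe how interaction length is measured or which summary formats the new version uses. As a hedged sketch of the idea, the summarization request can scale with conversation length. The word-count thresholds, the `Message` type, and the instruction strings below are all hypothetical.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical thresholds, in words; real values would be tuned with counsellor feedback.
SHORT_MAX_WORDS = 80
MEDIUM_MAX_WORDS = 400


@dataclass
class Message:
    sender: str  # e.g. "caller" or "counsellor"
    text: str


def word_count(messages: List[Message]) -> int:
    """Total whitespace-separated words across all messages in the conversation."""
    return sum(len(m.text.split()) for m in messages)


def summary_instructions(messages: List[Message]) -> str:
    """Scale the requested summary length to the length of the conversation."""
    words = word_count(messages)
    if words <= SHORT_MAX_WORDS:
        return "Summarize this brief contact in one or two sentences."
    if words <= MEDIUM_MAX_WORDS:
        return "Summarize this contact in one short paragraph."
    return "Summarize this contact in three paragraphs."
```

A two-line exchange then receives a one- or two-sentence summary request instead of being forced into three paragraphs. Word count is only one possible length signal; message count or duration would work the same way.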

These findings are early indicators that the feature is meaningfully augmenting counsellors’ work, maintaining human judgment and accuracy while improving efficiency.

Chart: Summary Accuracy Ratings During Test Window

Around 60% of AI-generated summaries were rated by counsellors during the Beta Test window, and most of these (81%) were rated Very accurate.

Chart: Call Wrap-Up Times

When counsellors used the AI feature, a higher proportion of chats had wrap-up times under 30 seconds, and a smaller proportion had wrap-ups that took 2–60 minutes (a small but statistically significant effect).
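The post does not name the significance test used. One standard way to compare the share of chats with sub-30-second wrap-ups between chats with and without the AI feature is a two-proportion z-test, sketched below. It assumes chats are independent and samples are large enough for the normal approximation; a chi-squared test over all wrap-up time buckets would be an alternative for the 2–60 minute comparison.

```python
import math
from typing import Tuple


def two_proportion_z_test(hits_a: int, n_a: int, hits_b: int, n_b: int) -> Tuple[float, float]:
    """Two-sided z-test for a difference between two proportions, using a pooled estimate.

    hits_a / n_a: e.g. chats with wrap-up under 30 seconds, out of chats using the AI feature.
    hits_b / n_b: the same count for chats without the feature.
    Returns (z statistic, two-sided p-value).
    """
    p_a, p_b = hits_a / n_a, hits_b / n_b
    pooled = (hits_a + hits_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    # Two-sided p-value from the standard normal: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

With placeholder counts of 60/100 versus 40/100, this gives z = 2√2 ≈ 2.83 and p ≈ 0.005. A significant p-value only says the difference is unlikely to be chance; the effect size (the gap between proportions) is what shows whether it is small.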

Learning 3: Classification Is a Counsellor Workflow Challenge, Not Just a Model Challenge

Task definition shapes model performance, and human workflow shapes task definition.

Our initial focus for generative AI was helpline issue categorization. This seemed like a straightforward classification problem: pick the top three issues that came up in a conversation from a list of topics. We expected this to translate into significant productivity gains, but it proved more challenging than anticipated.

In practice, counsellors handle hundreds of live calls each week, and most use a small set of familiar categories. Remembering definitions and navigating long lists while keeping up with emerging issue trends is the real challenge.

What we tested

We ran zero-shot and few-shot experiments with GPT-4o-mini using fully redacted transcripts. We compared the results to a baseline that always selected the three most common labels. The model outperformed the baseline for two of three helplines, and performance improved further when we grouped labels into broader themes.
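The baseline and the label-grouping step can be sketched as follows. The post does not say which accuracy metric was used, so this sketch assumes a per-contact recall (the fraction of counsellor-selected labels found among the three predictions). The labels and the theme mapping in the example are hypothetical, and the GPT-4o-mini prompting itself is not shown.

```python
from collections import Counter
from typing import Dict, List, Sequence

Labels = List[str]


def most_common_baseline(train_labels: Sequence[Labels], k: int = 3) -> Labels:
    """The k labels used most often in historical data, predicted for every contact."""
    counts = Counter(label for labels in train_labels for label in labels)
    return [label for label, _ in counts.most_common(k)]


def recall_at_k(predicted: Labels, actual: Labels) -> float:
    """Fraction of counsellor-selected labels that appear among the predictions."""
    if not actual:
        return 0.0
    return len(set(predicted) & set(actual)) / len(set(actual))


def to_themes(labels: Labels, theme_map: Dict[str, str]) -> Labels:
    """Collapse fine-grained labels into broader themes; unmapped labels keep their name."""
    return sorted({theme_map.get(label, label) for label in labels})
```

For example, with historical labels `[["bullying", "anxiety"], ["anxiety"], ["family", "anxiety"], ["bullying"]]`, the baseline always predicts `["anxiety", "bullying", "family"]`. For a contact labelled `["anxiety", "self-harm"]`, recall is 0.5. Under a hypothetical mapping that places both anxiety and self-harm in a "mental health" theme, the same prediction reaches 1.0. This illustrates why coarser label sets make the task easier for any classifier, including the baseline.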

On paper, this looked like progress. Even simple AI-assisted classification improved accuracy while reducing cognitive load. Yet the results did not fully align with what counsellors needed. The technical improvements revealed that prediction alone was not enough to make the process truly useful.

What counsellors taught us was reflected in the data

I had assumed that the issue classification task was cognitively demanding. For example, one helpline uses 163 possible categories, and counsellors choose up to three issue categories for each contact. But counsellors shared something that surprised us. They do not find the 163-category list overwhelming; they mostly use a small set of familiar categories.

The real difficulty comes from remembering the definitions of similar or overlapping labels and navigating long lists required for reporting.

The data confirmed this pattern. Across helplines, roughly two-thirds of labels came from the ten most frequently used categories, while the remaining categories (up to 150) formed a long tail of rarely used labels.
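The "roughly two-thirds from the top ten" figure is a simple concentration measure. Below is a minimal sketch of how such a share could be computed from per-contact label selections. The function name and toy data are illustrative; the post does not say exactly how its counts were produced.

```python
from collections import Counter
from typing import List, Sequence


def top_k_share(contact_labels: Sequence[List[str]], k: int = 10) -> float:
    """Share of all label selections accounted for by the k most frequent categories."""
    counts = Counter(label for labels in contact_labels for label in labels)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    top = sum(count for _, count in counts.most_common(k))
    return top / total
```

Sweeping `k` from 1 upward traces the long tail: the share climbs quickly over the first few categories, then flattens as the remaining, rarely used categories add little.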

A promising direction

Helplines explained that they sometimes create new issue categories after noticing patterns in free-text summaries during data reviews. This highlighted a promising direction for AI: supporting the evolution of category sets by recognizing issues emerging from new summaries. Our focus shifted from predicting issue categories to understanding how these categories evolve, and considering how to design tools that support that evolution. The next phase for categorization efforts pauses AI experimentation to focus on co-designing categorization approaches with helplines, grounded in counsellors’ actual workflows. AI isn’t the most essential tool for this challenge, but it did help us discover what is essential: in this case, strengthening data collection practices.

Looking Ahead: Counsellor AI Assistant that Scales

Our next phase involves scaling the Counsellor AI Assistant across the entire data entry and case management workflow. This includes exploring automated entry of form fields while continuing to strengthen secure data pipelines and test AI models hosted fully within our infrastructure.

We have found that the most valuable AI features are those that assist counsellors in their workflow, give them agency over which data is recorded, and maintain transparency at every step. By embedding safeguards, incorporating human feedback, and continually evaluating outcomes, we can scale AI data entry responsibly while preserving counsellor agency and the quality of their interactions with children and families.

This direction aligns with Aselo’s broader strategy to strengthen the helpline ecosystem across four goals: improving counsellor experience, enhancing data quality, expanding insight into callers’ needs, and increasing global reach. To us, responsible AI in helplines is not about replacing people. It is about amplifying their impact: ensuring that every call is well documented, every counsellor is supported, and the system frees people to spend their time supporting people in crisis.

We would especially like to thank Safe Online and The Agency Fund for their financial support of this project, and OpenAI for agreeing to zero data retention and for providing in-kind credits.